
Feature Story

Microsoft AI Researchers Expose 38TB of Data, Including Keys, Passwords and Internal Messages

Sep 19, 2023 · news.bensbites.co

Microsoft's security response team invalidated the exposed storage access token within two days of Wiz's disclosure in June and replaced it on GitHub a month later. The company has since published a blog post explaining the data leak and how to prevent similar incidents. Microsoft said that no customer data was exposed and that no other internal services were put at risk by the issue.

Key takeaways

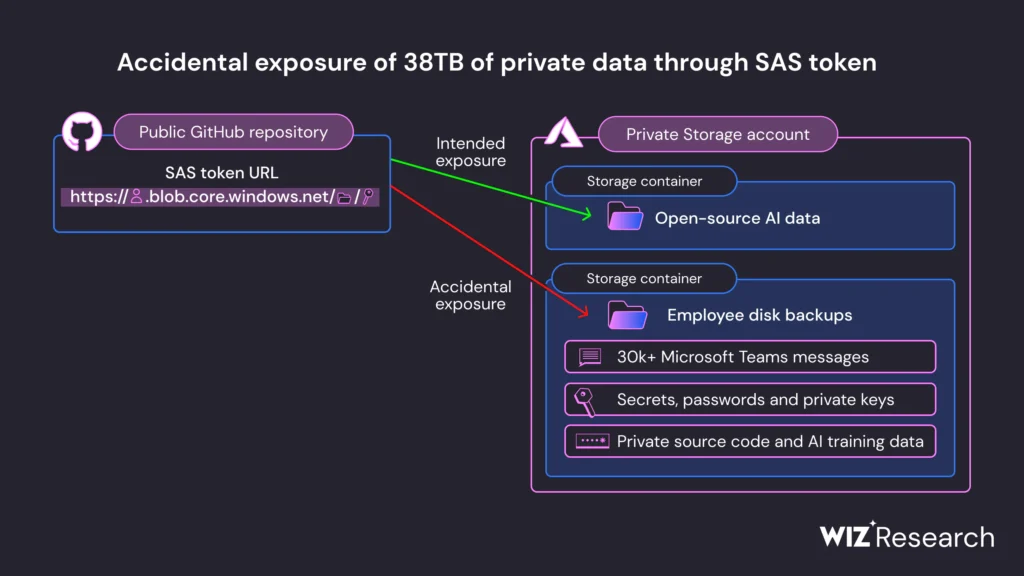

- Microsoft exposed 38 terabytes of private data during a routine update of open-source AI training material on GitHub. The exposed data included a disk backup of two employees’ workstations, corporate secrets, private keys, passwords, and over 30,000 internal Microsoft Teams messages.

- The security misstep was discovered by Wiz, a cloud data security startup founded by ex-Microsoft software engineers, during routine internet scans for misconfigured storage containers.

- Microsoft shared the data using an Azure feature called SAS (shared access signature) tokens, but the link was configured to share the entire storage account, including private files, rather than only the intended files. The token also granted “full control” permissions instead of read-only access, potentially allowing attackers to delete and overwrite existing files.

- Microsoft’s security response team invalidated the SAS token within two days of the initial disclosure in June 2023, and the token was replaced on GitHub a month later. Microsoft said that no customer data was exposed and that no other internal services were put at risk by the issue.
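
To make the SAS misconfiguration described above more concrete, here is a minimal Python sketch that inspects a SAS URL before it is shared. It is an illustration, not Microsoft's or Wiz's tooling. It assumes the standard SAS query parameters (`ss` and `srt` for account-level tokens, `sp` for permissions, `se` for expiry). The function name `audit_sas_url`, the seven-day lifetime limit, the rule that only read and list permissions count as read-only, and the example URLs are all hypothetical choices made for this sketch.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs, urlsplit

# Assumption for this sketch: only read (r) and list (l) count as read-only.
READ_ONLY = set("rl")


def audit_sas_url(url, now=None, max_lifetime=timedelta(days=7)):
    """Return a list of warnings for a SAS URL (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    # parse_qs returns lists of values; keep the first value of each parameter.
    params = {k: v[0] for k, v in parse_qs(urlsplit(url).query).items()}
    findings = []

    # Account-level SAS tokens carry ss/srt and can cover a whole storage account.
    if "ss" in params or "srt" in params:
        findings.append("account-level SAS: scope may cover the entire storage account")

    # Any permission letter beyond read/list goes past read-only sharing.
    extra = set(params.get("sp", "")) - READ_ONLY
    if extra:
        findings.append("permissions beyond read-only: " + "".join(sorted(extra)))

    # Check the expiry time against the chosen maximum lifetime.
    expiry = params.get("se")
    if expiry is None:
        findings.append("no expiry (se) parameter found")
    else:
        expires_at = datetime.fromisoformat(expiry.replace("Z", "+00:00"))
        if expires_at.tzinfo is None:
            expires_at = expires_at.replace(tzinfo=timezone.utc)
        if expires_at - now > max_lifetime:
            findings.append("expiry is more than %d days away" % max_lifetime.days)

    return findings


if __name__ == "__main__":
    # Hypothetical URL; the account name and signature are placeholders.
    risky = ("https://example.blob.core.windows.net/?sv=2020-08-04&ss=b&srt=sco"
             "&sp=rwdl&se=2051-10-06T00:00:00Z&sig=REDACTED")
    for warning in audit_sas_url(risky):
        print(warning)
```

A check like this only reads the URL; it cannot tell whether a token has already been revoked, so it complements rather than replaces storage-side monitoring.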