
Feature Story

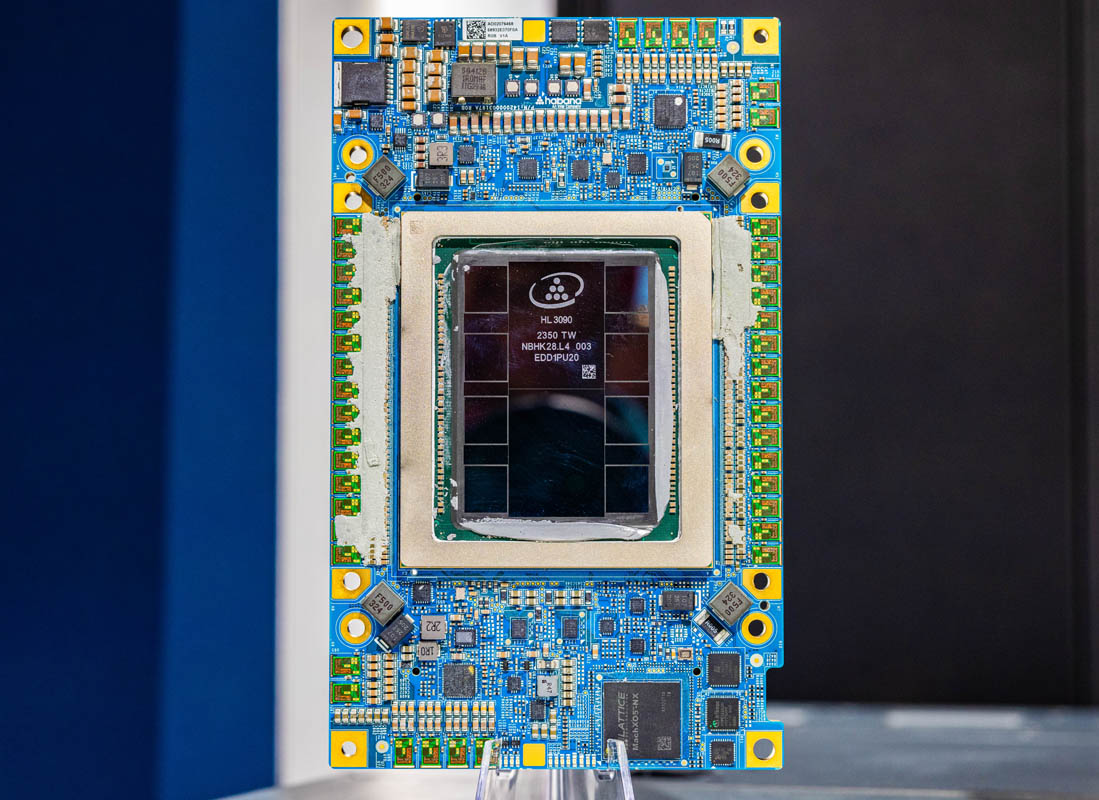

This is Intel Gaudi 3 the New 128GB HBM2e AI Chip in the Wild

Apr 22, 2024 · servethehome.com

Intel claims that the Gaudi 3 is more power-efficient than the NVIDIA H100 in inference, and sometimes faster, which would make it a strong contender in the AI inference market. Intel also claims faster training than the H100. The Gaudi 3 is set to go into volume production later in 2024, in both air-cooled and liquid-cooled variants. The company plans to offer the Gaudi 3 at a substantially lower cost than NVIDIA's offerings.

Key takeaways

- The Intel Gaudi 3 is a new AI accelerator that is set to go into volume production in 2024. It is a significant improvement over the previous generation Gaudi 2, with more memory, more compute, and faster interconnect.

- The Gaudi 3 uses Ethernet to scale both up and out, with 24 network interfaces running at 200GbE, up from 100GbE in Gaudi 2. The same links connect AI accelerators within a chassis and across multiple chassis in a data center.

- Intel claims that the Gaudi 3 is more power-efficient than the NVIDIA H100 in inference, and sometimes faster. It is expected to find its place in the AI inference market.

- The Gaudi 3 will be available in air-cooled and liquid-cooled variants, with production set to begin in the second half of 2024. It is expected to be offered at a substantially lower cost than NVIDIA's offerings.
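
To put the networking figures from the takeaways in perspective, here is a back-of-the-envelope sketch of aggregate per-accelerator Ethernet bandwidth. It uses only the port count and per-port speeds quoted above. The Gaudi 2 port count is assumed to be the same 24, and the result is raw line rate in one direction, ignoring protocol overhead, so treat it as a rough upper bound rather than a measured number.

```python
# Rough aggregate Ethernet line rate per accelerator.
# Raw line rate only: no protocol overhead, one direction.

BITS_PER_BYTE = 8


def aggregate_gbps(ports: int, gbps_per_port: int) -> int:
    """Total line rate in Gb/s across all network interfaces."""
    return ports * gbps_per_port


# 24 interfaces at 200GbE, as quoted in the article.
gaudi3_gbps = aggregate_gbps(24, 200)

# Assumption: Gaudi 2 also exposes 24 interfaces, at 100GbE each.
gaudi2_gbps = aggregate_gbps(24, 100)

print(f"Gaudi 3: {gaudi3_gbps} Gb/s ({gaudi3_gbps / BITS_PER_BYTE:.0f} GB/s)")
print(f"Gaudi 2: {gaudi2_gbps} Gb/s ({gaudi2_gbps / BITS_PER_BYTE:.0f} GB/s)")
print(f"Ratio:   {gaudi3_gbps / gaudi2_gbps:.1f}x")
```

Under these assumptions the per-accelerator line rate doubles from one generation to the next, which follows directly from doubling the per-port speed at a constant port count.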