Feature Story

Will scaling work?

Dec 27, 2023 · dwarkeshpatel.com

Key takeaways

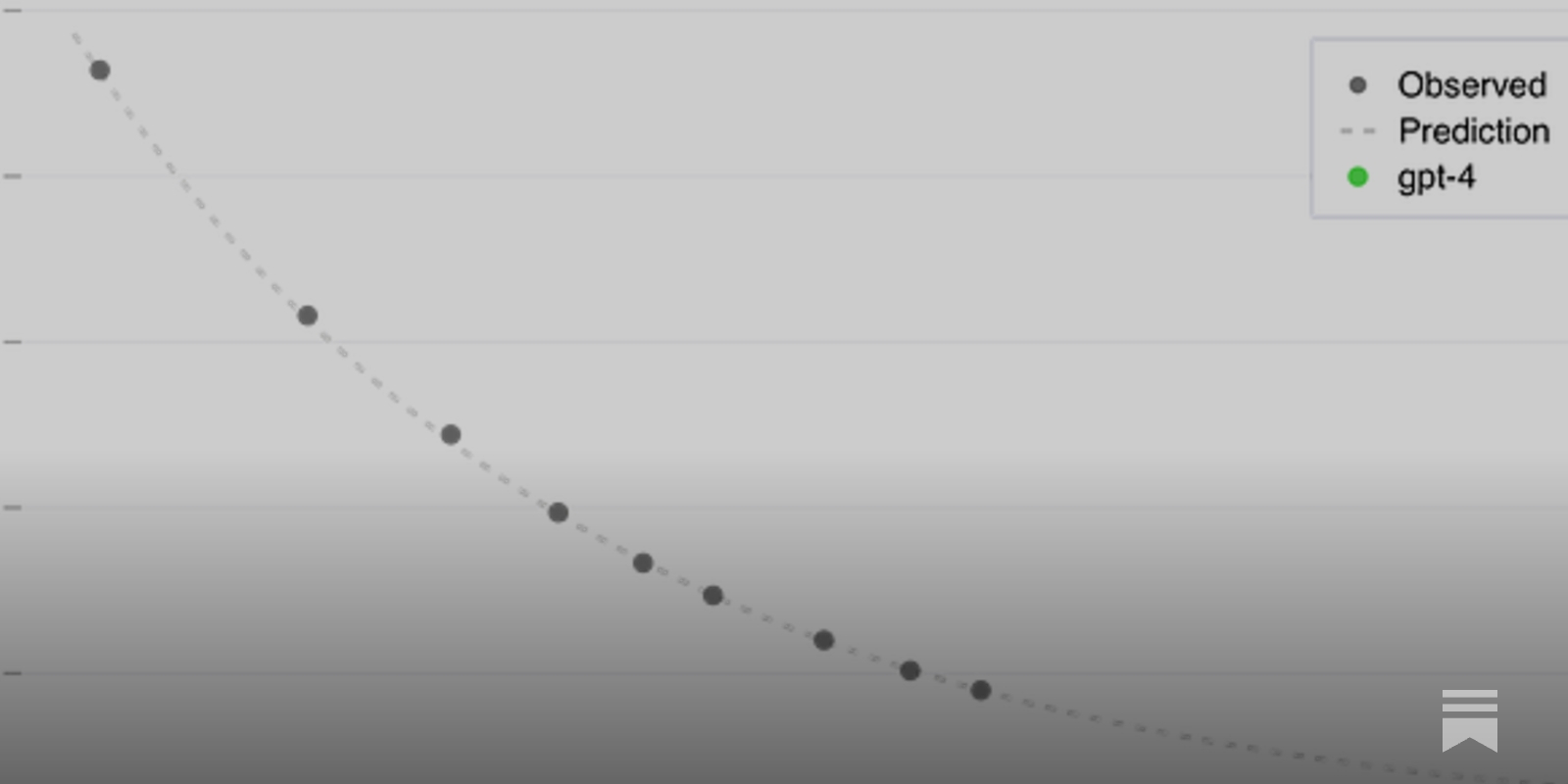

- The article weighs whether Artificial General Intelligence (AGI) could be achieved by 2040 through scaling Large Language Models (LLMs) and the performance improvements that scaling has delivered.

- The debate between the "Believer" and the "Skeptic" highlights the challenges of scaling LLMs, including the need for exponential increases in data and compute, and possible solutions such as self-play and synthetic data.

- The "Believer" argues that, despite the lack of an airtight theoretical explanation, the consistent performance gains from scaling LLMs suggest that transformative AI could be achieved with further scale-up and advances in hardware and algorithms.

- The "Skeptic" counters that current benchmarks may not accurately measure true progress towards generality, and that LLMs may not be capable of the kind of insight-based learning and efficient meta-learning seen in human cognition.